Data virtualization eliminates the need to replicate data, which is an expensive and time-consuming process.Īlso see: What Is Data Virtualization? ETL Use Cases Instead, data is accessed via a virtual data layer. For example, data virtualization is the process of aggregating data across sources without actually moving the data to a new warehouse. For example, users can pull historical reports or real-time data to support strategic decisions.Īlternatives to the ETL process do exist. Enterprises can then use the data in a number of ways. Once the transformed data is loaded into a database, it can then be accessed by data analysis tools and other software. The loading process can be completed all at once or over time, depending on an enterprise’s needs and data management capabilities. Without this step, the data is completely unreliable.Īlso see: What Is Data Analytics? Your Guide to Data Analytics Loadĭuring the load phase, the transformed data is loaded into a data warehouse. The transformation stage is critical for ensuring that high-quality and accurate data is all that fills the database. Data can also be sorted based on type, filtered, and manipulated in other ways. For example, one method is deduplication, which involves the removal of multiple identical data entries.Īnother method is cleaning, where data is scoured to remove inaccurate values and other anomalies. This process can be completed in a number of ways. Transformĭuring the transform phase, data is transformed into a specific format. Data will need to be transformed before it can be used, which occurs during the next step. Keeping the data separate from the endpoint is critical for ensuring data quality. Once the data is extracted, it is then moved to a single repository separate from the desired data warehouse.

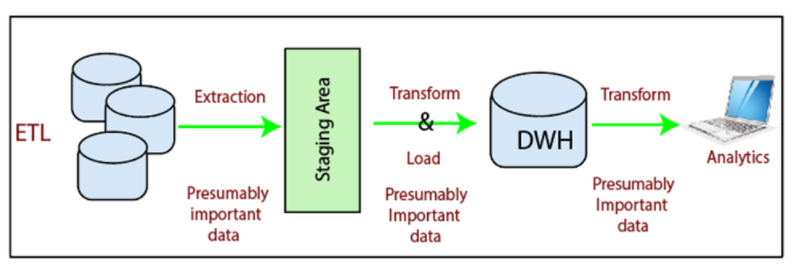

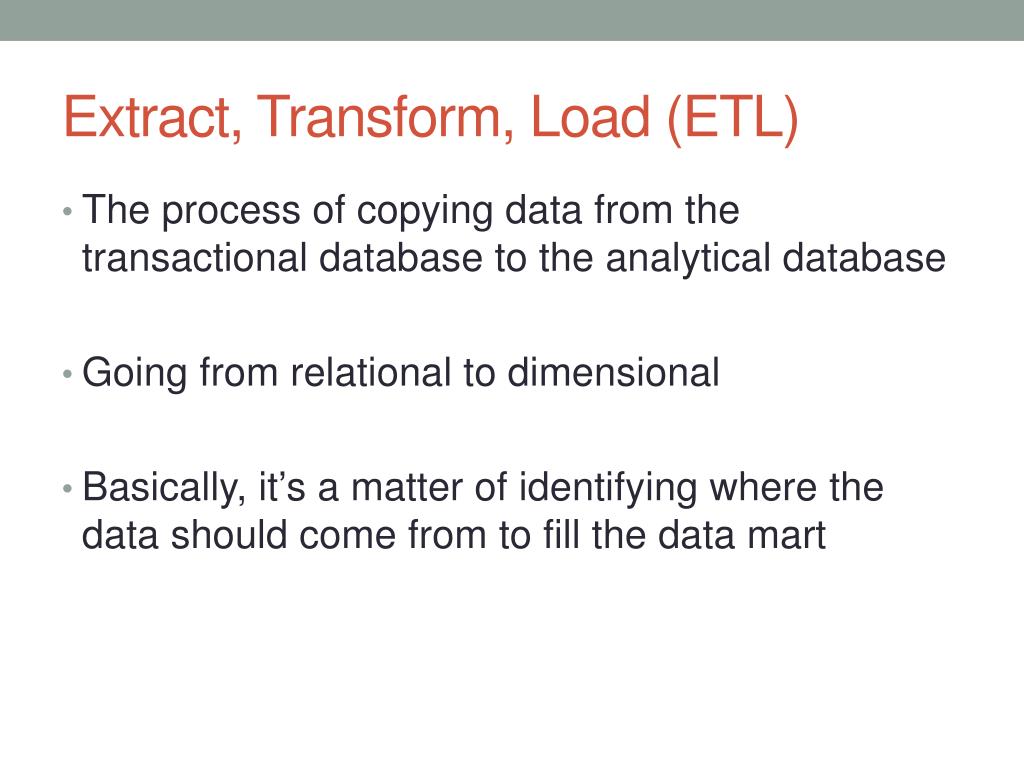

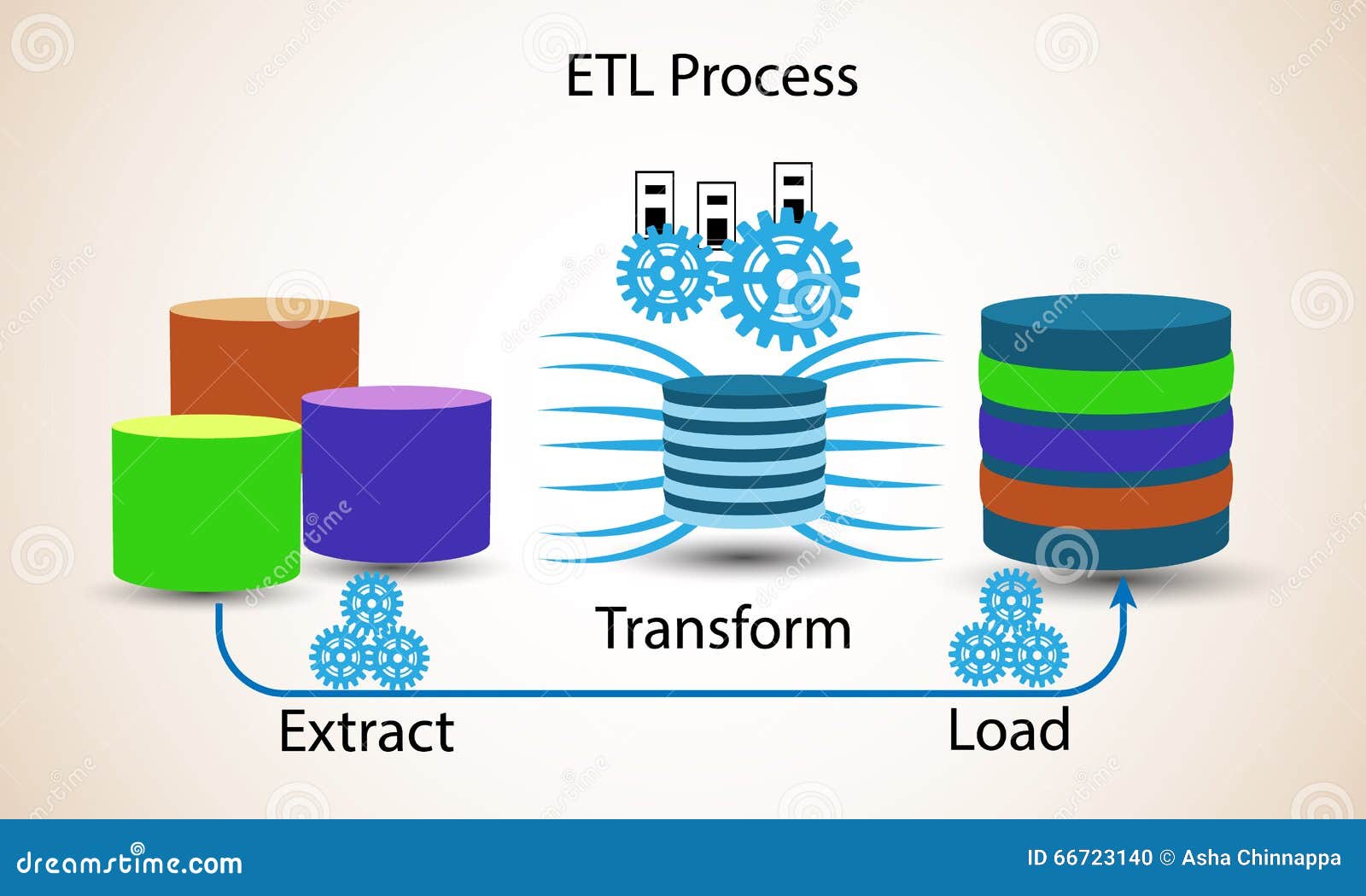

These sources may include everything from software to relational databases to flat files. Extractĭuring the extract phase, data is extracted from all of its various sources. Let’s dive further into each step of the process. This process of extracting data from various sources, transforming it through various methods, and loading it into a data warehouse i s typically carried out through the use of software tools.

This process, known as ETL (extract, transform, load), is critical for enterprises ready to reap the benefits of high-quality data analytics.Īlso see: Do the Benefits of a Data Warehouse Outweigh the Cost? The Extract, Transform, Load (ETL) Process Yet, to be moved, data must be extracted from its original source, transformed into something valuable, and then loaded into the data warehouse. To use this data to its fullest potential, it must be moved into one centralized location, also called a data warehouse. The data also comes in many forms, such as structured and unstructured. And while a lack of talent and resistance to change may be driving forces behind the struggle, another factor must be considered: the sheer number of data sources.ĭata comes from a wide range of places: an enterprise’s CRM, social media, customer purchases, and marketing campaigns are only a few examples. And while this data could be a treasure trove of strategic insights, many enterprises struggle to make sense of it all.Īccording to a recent survey, 76% of organizations are still struggling to understand their data.

Some background: Each day, companies collect massive amounts of data. Learn More.Įxtract, transform, load (ETL) is the process of extracting data from various sources, transforming it through various methods, and loading it into a data warehouse or data lake. We may make money when you click on links to our partners. EWEEK content and product recommendations are editorially independent.
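To make the extract, transform, and load steps more concrete, here is a minimal Python sketch that uses only the standard library. Everything in it is an assumption made for illustration: the sample CSV text and CRM rows, the `purchases` table, the rule that negative amounts count as anomalies, and the in-memory SQLite database standing in for a data warehouse. Production ETL tools do far more; this only shows the shape of the three phases.

```python
import csv
import io
import sqlite3

# Hypothetical flat-file source (stands in for a CSV export).
CSV_SOURCE = """customer,amount
alice,120.50
bob,not-a-number
alice,120.50
"""

# Hypothetical application source (stands in for rows pulled from a CRM).
CRM_SOURCE = [
    {"customer": "carol", "amount": "75"},
    {"customer": "bob", "amount": "-10"},
]


def extract():
    """Pull raw rows from every source into one staging list."""
    staged = list(csv.DictReader(io.StringIO(CSV_SOURCE)))
    staged.extend(CRM_SOURCE)
    return staged


def transform(staged):
    """Clean, deduplicate, and sort the staged rows."""
    cleaned = set()  # a set drops identical (customer, amount) entries
    for row in staged:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # cleaning: skip values that are not numbers
        if amount < 0:
            continue  # cleaning: assume negative amounts are anomalies
        cleaned.add((row["customer"].strip().lower(), amount))
    return sorted(cleaned)


def load(rows, conn):
    """Write the transformed rows into the warehouse table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS purchases (customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO purchases VALUES (?, ?)", rows)
    conn.commit()


def run_pipeline():
    """Run extract, transform, and load against an in-memory database."""
    conn = sqlite3.connect(":memory:")  # stand-in for a data warehouse
    load(transform(extract()), conn)
    return conn


if __name__ == "__main__":
    warehouse = run_pipeline()
    query = (
        "SELECT customer, SUM(amount) FROM purchases "
        "GROUP BY customer ORDER BY customer"
    )
    for customer, total in warehouse.execute(query):
        print(customer, total)
```

The staging list returned by `extract()` plays the role of the separate repository that holds raw data before it reaches the warehouse. Only rows that survive cleaning and deduplication in `transform()` are passed to `load()`, after which an analysis tool could query the table as the final loop does.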
